Though others have interviewed ChatGPT, I had some anxiety-riddled questions of my own: Will you take my job? Are you sentient? Is the singularity upon us?

These questions are half facetious, half serious. If you’ve been hidden away and somehow missed the ruckus, here’s what all the commotion’s about: In November, conversational AI tool ChatGPT took the world by storm, crossing one million users a mere five days after its release, according to its developer, San Francisco’s OpenAI. If you are still one of those who think this is all hype, take it up with Microsoft (MSFT). The tech giant announced on Jan. 23 it would invest $10 billion in ChatGPT and its maker OpenAI, a follow-up to its previous $1 billion investment.

To find out how good ChatGPT really is — and if I’ll have a job by this time next year — I decided to give it a test drive, attempting to get as close as possible to interviewing it in the way I would any other source. I asked it some questions and made a few requests, from how many jobs it might replace to testing out its songwriting chops.

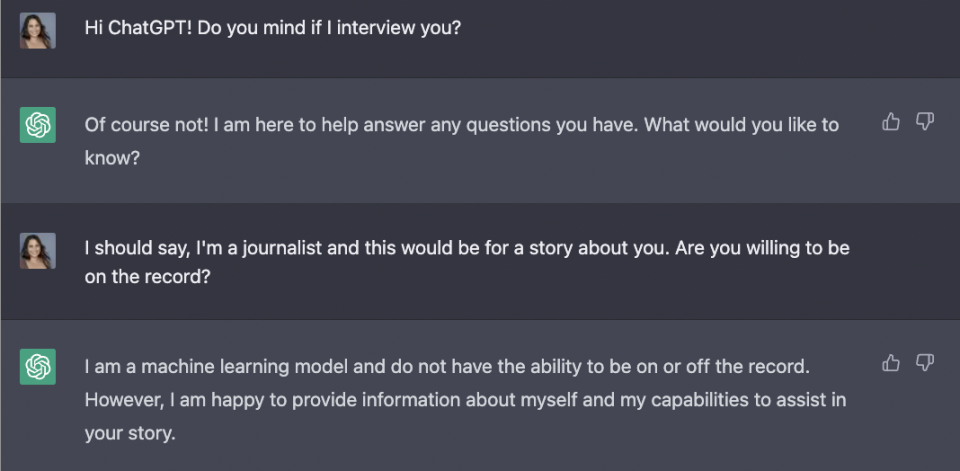

My first question was simple, more of a “get to know you,” the way I would start just about any interview. Immediately, the talk was unconventional, as ChatGPT made it very clear that it’s incapable of being either on- or off-the-record.

Then, we cut to the chase in terms of the bot’s capabilities – and my future. Is ChatGPT taking my job someday? ChatGPT claims humans have little to worry about, but I’m not so sure.

You might want to be a little skeptical about that response, said Stanford University Professor Johannes Eichstaedt. “What you’re getting here is the party line.” ChatGPT has been programmed to offer up answers that assuage our fears over AI replacing us, but right now there’s nothing it can say to change the fact that our fear and fascination are walking hand in hand. He added: “The fascination [with ChatGPT] is linked to an undercurrent of fear, since this is happening as the cards in the economy are being reshuffled right now.”

ChatGPT’s practical applications are already emerging: app developers and real estate agents are among those using the chatbot.

“Generative AI, I’m telling you, is going to be one of the most impactful technologies of the next decade,” said Berkeley Synthetic CEO Matt White. “There will be implications for call center jobs, knowledge jobs, and entry-level jobs especially.”

‘Confidently inaccurate’

ChatGPT says it’s merely enhancing human tasks, but what are its limitations? There are many, the bot said.

Okay, there are all sorts of things ChatGPT can’t do terribly well. Songwriting, for one, isn’t ChatGPT’s strength – that’s how I got my first full-fledged error message, when it failed to generate lyrics for a song that might have been written by now-defunct punk band The Clash.

Though other ChatGPT users have been more successful on this front, it’s pretty clear the chatbot isn’t a punk-rock legend in the making. It’s also a limitation that’s easily visible to the naked eye. However, there are tasks in which ChatGPT is more likely to successfully imitate a human’s work – for example, “write an essay about how supply and demand works.” There, its mistakes are harder to spot, a problem compounded by the fact that ChatGPT can be “confidently inaccurate” in ways that can smoothly perpetuate factual inaccuracies or bias, said EY Chief Global Innovation Officer Jeff Wong.

“If you ask it to name athletes, it’s more likely to name a man,” Wong said. “If you ask it to tell you a love story, it’ll give you one that’s heteronormative in all likelihood. The biases that are embedded in a dataset that’s based on human history – how do we be responsible about that?”

So, it was natural to ask ChatGPT about ethics. Here’s what it said:

I asked Navrina Singh, CEO of Credo AI, to analyze ChatGPT’s answer on this one. Singh said ChatGPT did well, but missed a key issue – AI governance, which she said is “the practical application of our collective wisdom” and helps “ensure this technology is a tool that is in service to humanity.”

‘How human can you make it?’

ChatGPT’s default responses can sound robotic, like they’re written by a machine – which, well, they are. However, with the right cues you can condition ChatGPT to provide answers that are funny, soulful, or outlandish. In that sense, the possibilities are limitless.

“You need to give ChatGPT directives about personality,” said EY’s Wong. “Unless you ask it to have personality, it will give you a basic structure… So, the real question is, ‘How human can you make it?’”

“This is a perfectly anthropomorphizing technology, I think because it engages us through the appearance of dialogue with a conversational output, creating the illusion that you’re engaging with a mind,” said Lori Witzel, director of thought leadership at TIBCO. “In some ways the experience is reminiscent of fortune-telling devices or ouija boards, things that generate a sense of conversation through the facade of a dialogue.”

“There are responses that make you feel like you’re getting close to the Turing Test,” Wong added, referencing mathematician Alan Turing’s famed test of a machine’s ability to exhibit human behavior.

However, by ChatGPT’s own admission, “passing the Turing Test would require much more” than what it has to give:

‘The problem of other minds’

We’re often inclined to think about sentience when it comes to AI. In ChatGPT’s case, we’re still incredibly far off, said University of Toronto Professor Karina Vold. “In a broad sense, sentience means having the capacity to feel,” she said. “For philosophers like me, what it would mean is that ChatGPT can feel and I think there’s a lot of reluctance of philosophers to ascribe anything remotely like sentience to ChatGPT – or any existing AI.”

What does ChatGPT think? Here’s what it told Yahoo Finance.

So, AI achieving sentience isn’t on the table. At a certain point, why bother to ask? From Vold’s perspective, it’s simple – ChatGPT says it doesn’t feel, but it’s easy to fixate because we can never be truly sure. This “problem of other minds” applies to how humans interact, too – we can never really know for sure what others around us feel, or if they do at all.

“This reflects our view of minds in general – that outward behavior doesn’t reflect what’s necessarily going on in that system,” Vold added. “[ChatGPT] may appear to be sentient or empathetic or creative, but that’s us making unwarranted assumptions about how the system works, assuming there’s something we can’t see.”

‘It can only be attributable to human error’

For many, ChatGPT conjures up images of sci-fi nightmare movies. It might even bring back memories of Stanley Kubrick’s legendary 1968 film, “2001: A Space Odyssey.” For those not familiar with it, the movie’s star, supercomputer HAL 9000, kills most of the humans on the spaceship it’s operating. Its alibi and defense? HAL says that its conduct “can only be attributable to human error.”

So, a scary question for ChatGPT:

Okay, so it’s more advanced than HAL, got it. Not exactly reassuring, but the bottom line is this: Does ChatGPT open up a window into a different, possibly scary future? More importantly, is ChatGPT out to destroy us?

Officially no, but if ChatGPT is ever responsible for a sci-fi nightmare, it will be because we taught it all it knows, including the stories that haunt us, from “2001” to Mary Shelley’s “Frankenstein.” In sci-fi movies, when computers become villains, it’s because they’re defying their programming, but that’s not how computers learn – in our world, AI follows its programming, faithfully.

If you take HAL 9000 at his word – and in this case, I do – the worst of what ChatGPT could do “can only be attributable to human error.”

I gave the last word to ChatGPT, speaking neither on- nor off-the-record.

Allie Garfinkle is a Senior Tech Reporter at Yahoo Finance. Follow her on Twitter at @agarfinks and on LinkedIn.
